Biology

- Anonymous Conservative [Michael Trust]. The Evolutionary Psychology Behind Politics. Macclenny, FL: Federalist Publications, [2012, 2014] 2017. ISBN 978-0-9829479-3-7.

- One of the puzzles noted by observers of the contemporary political and cultural scene is the division of the population into two factions (called, in the sloppy terminology of the United States, “liberal” and “conservative”), and that if you pick a member from either faction by observing his or her position on one of the divisive issues of the time, you can, with a high probability of accuracy, predict their preferences on all of a long list of other issues which do not, on the face of it, seem to have very much to do with one another. For example, here is a list of present-day hot-button issues, presented in no particular order.

- Health care, socialised medicine

- Climate change, renewable energy

- School choice

- Gun control

- Higher education subsidies, debt relief

- Free speech (hate speech laws, Internet censorship)

- Deficit spending, debt, and entitlement reform

- Immigration

- Tax policy, redistribution

- Abortion

- Foreign interventions, military spending

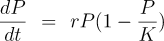
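If the image fails to load: going by the term-by-term walk-through that follows it (“dP/dt”, “rP”, and the factor “(1 − P/K)”), the equation pictured is presumably the Verhulst logistic growth equation, which in LaTeX notation would read:

$$
\frac{dP}{dt} = rP\left(1 - \frac{P}{K}\right)
$$

Here P is the population, t is time, r is the growth rate, and K is the carrying capacity of the habitat.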

It's a maxim among popular science writers that every equation you include cuts your readership by a factor of two, so among the hardy half who remain, let's see how this works. It's really very simple (and indeed, far simpler than actual population dynamics in a real environment). The left side, “dP/dt” simply means “the rate of growth of the population P with respect to time, t”. On the right hand side, “rP” accounts for the increase (or decrease, if r is less than 0) in population, proportional to the current population. The population is limited by the carrying capacity of the habitat, K, which is modelled by the factor “(1 − P/K)”. Now think about how this works: when the population is very small, P/K will be close to zero and, subtracted from one, will yield a number very close to one. This, then, multiplied by the increase due to rP will have little effect and the growth will be largely unconstrained. As the population P grows and begins to approach K, however, P/K will approach unity and the factor will fall to zero, meaning that growth has completely stopped due to the population reaching the carrying capacity of the environment—it simply doesn't produce enough vegetation to feed any more rabbits. If the rabbit population overshoots, this factor will go negative and there will be a die-off which eventually brings the population P below the carrying capacity K. (Sorry if this seems tedious; one of the great things about learning even a very little about differential equations is that all of this is apparent at a glance from the equation once you get over the speed bump of understanding the notation and algebra involved.) This is grossly over-simplified. In fact, real populations are prone to oscillations and even chaotic dynamics, but we don't need to get into any of that for what follows, so I won't. Let's complicate things in our bunny paradise by introducing a population of wolves. 
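Before the wolves arrive, the single-species behaviour just described can be checked numerically. What follows is a minimal sketch, not anything taken from the book: it steps the equation forward with simple Euler integration, and the values of `R`, `K`, the starting populations, and the step size are all made-up illustrative assumptions.

```python
def logistic_step(p, r, k, dt):
    """Advance the population by one Euler step of dP/dt = r*P*(1 - P/K)."""
    return p + r * p * (1.0 - p / k) * dt


def simulate(p0, r, k, dt=0.01, steps=5000):
    """Return the population after `steps` Euler steps, starting from p0."""
    p = p0
    for _ in range(steps):
        p = logistic_step(p, r, k, dt)
    return p


# Illustrative numbers only: R, K and the populations below are assumptions.
R, K = 1.0, 1000.0

factor_small = 1.0 - 10.0 / K     # P much smaller than K: factor close to one
factor_full = 1.0 - K / K         # P equal to K: factor is zero, growth stops
factor_over = 1.0 - 1500.0 / K    # P above K: factor is negative, a die-off

final_from_below = simulate(10.0, R, K)    # a few rabbits on an empty island
final_from_above = simulate(1500.0, R, K)  # an overshoot above K

print(factor_small, factor_full, factor_over)
print(final_from_below, final_from_above)
```

Under these assumptions both runs settle at the carrying capacity: the small population grows almost unconstrained at first and then levels off, while the overshooting one shrinks back toward K. The oscillations and chaotic dynamics mentioned above do not show up at this small step size and growth rate.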
The wolves can't eat the vegetation, since their digestive systems cannot extract nutrients from it, so their only source of food is the rabbits. Each wolf eats many rabbits every year, so a large rabbit population is required to support a modest number of wolves. Now if we go back and look at the equation for wolves, K represents the number of wolves the rabbit population can sustain, in the steady state, where the number of rabbits eaten by the wolves just balances the rabbits' rate of reproduction. This will often result in a rabbit population smaller than the carrying capacity of the environment, since their population is now constrained by wolf predation and not K. What happens as this (oversimplified) system cranks away, generation after generation, and Darwinian evolution kicks in? Evolution consists of two processes: variation, which is largely random, and selection, which is sensitively dependent upon the environment. The rabbits are unconstrained by K, the carrying capacity of their environment. If their numbers increase beyond a population P substantially smaller than K, the wolves will simply eat more of them and bring the population back down. The rabbit population, then, is not at all constrained by K, but rather by r: the rate at which they can produce new offspring. Population biologists call this an r-selected species: evolution will select for individuals who produce the largest number of progeny in the shortest time, and hence for a life cycle which minimises parental investment in offspring and against mating strategies, such as lifetime pair bonding, which would limit their numbers. Rabbits which produce fewer offspring will lose a larger fraction of them to predation (which affects all rabbits, essentially at random), and the genes which they carry will be selected out of the population. 
An r-selected population, sometimes referred to as r-strategists, will tend to be small, with short gestation time, high fertility (offspring per litter), rapid maturation to the point where offspring can reproduce, and broad distribution of offspring within the environment. Wolves operate under an entirely different set of constraints. Their entire food supply is the rabbits, and since it takes a lot of rabbits to keep a wolf going, there will be fewer wolves than rabbits. What this means, going back to the Verhulst equation, is that the 1 − P/K factor will largely determine their population: the carrying capacity K of the environment supports a much smaller population of wolves than their food source, rabbits, and if their rate of population growth r were to increase, it would simply mean that more wolves would starve due to insufficient prey. This results in an entirely different set of selection criteria driving their evolution: the wolves are said to be K-selected or K-strategists. A successful wolf (defined by evolution theory as more likely to pass its genes on to successive generations) is not one which can produce more offspring (who would merely starve by hitting the K limit before reproducing), but rather highly optimised predators, able to efficiently exploit the limited supply of rabbits, and to pass their genes on to a small number of offspring, produced infrequently, which require substantial investment by their parents to train them to hunt and, in many cases, acquire social skills to act as part of a group that hunts together. These K-selected species tend to be larger, live longer, have fewer offspring, and have parents who spend much more effort raising them and training them to be successful predators, either individually or as part of a pack. 
“K or r, r or K: once you've seen it, you can't look away.” Just as our island of bunnies and wolves was over-simplified, the dichotomy of r- and K-selection is rarely precisely observed in nature (although rabbits and wolves are pretty close to the extremes, which is why I chose them). Many species fall somewhere in the middle and, more importantly, are able to shift their strategy on the fly, much faster than evolution by natural selection, based upon the availability of resources. These r/K shape-shifters react to their environment. When resources are abundant, they adopt an r-strategy, but as their numbers approach the carrying capacity of their environment, shift to life cycles you'd expect from K-selection. What about humans? At a first glance, humans would seem to be a quintessentially K-selected species. We are large, have long lifespans (about twice as long as we “should” based upon the number of heartbeats per lifetime of other mammals), usually only produce one child (and occasionally two) per gestation, with around a one year turn-around between children, and massive investment by parents in raising infants to the point of minimal autonomy and many additional years before they become fully functional adults. Humans are “knowledge workers”, and whether they are hunter-gatherers, farmers, or denizens of cubicles at The Company, live largely by their wits, which are a combination of the innate capability of their hypertrophied brains and what they've learned in their long apprenticeship through childhood. Humans are not just predators on what they eat, but also on one another. They fight, and they fight in bands, which means that they either develop the social skills to defend themselves and meet their needs by raiding other, less competent groups, or get selected out in the fullness of evolutionary time. But humans are also highly adaptable. 
Since modern humans appeared some time between fifty and two hundred thousand years ago they have survived, prospered, proliferated, and spread into almost every habitable region of the Earth. They have been hunter-gatherers, farmers, warriors, city-builders, conquerors, explorers, colonisers, traders, inventors, industrialists, financiers, managers, and, in the Final Days of their species, WordPress site administrators. In many species, the selection of a predominantly r or K strategy is a mix of genetics and switches that get set based upon experience in the environment. It is reasonable to expect that humans, with their large brains and ability to override inherited instinct, would be especially sensitive to signals directing them to one or the other strategy. Now, finally, we get back to politics. This was a post about politics. I hope you've been thinking about it as we spent time in the island of bunnies and wolves, the cruel realities of natural selection, and the arcana of differential equations. What does r-selection produce in a human population? Well, it might, say, be averse to competition and all means of selection by measures of performance. It would favour the production of large numbers of offspring at an early age, by early onset of mating, promiscuity, and the raising of children by single mothers with minimal investment by them and little or none by the fathers (leaving the raising of children to the State). It would welcome other r-selected people into the community, and hence favour immigration from heavily r populations. It would oppose any kind of selection based upon performance, whether by intelligence tests, academic records, physical fitness, or job performance. It would strive to create the ideal r environment of unlimited resources, where all were provided all their basic needs without having to do anything but consume. 
It would oppose and be repelled by the K component of the population, seeking to marginalise it as toxic, privileged, or exploiters of the real people. It might even welcome conflict with K warriors of adversaries to reduce their numbers in otherwise pointless foreign adventures. And K-troop? Once a society in which they initially predominated creates sufficient wealth to support a burgeoning r population, they will find themselves outnumbered and outvoted, especially once the r wave removes the firebreaks put in place when K was king to guard against majoritarian rule by an urban underclass. The K population will continue to do what they do best: preserving the institutions and infrastructure which sustain life, defending the society in the military, building and running businesses, creating the basic science and technologies to cope with emerging problems and expand the human potential, and governing an increasingly complex society made up, with every generation, of a population, and voters, who are fundamentally unlike them. Note that the r/K model completely explains the “crunchy to soggy” evolution of societies which has been remarked upon since antiquity. Human societies always start out, as our genetic heritage predisposes us to, K-selected. We work to better our condition and turn our large brains to problem-solving and, before long, the privation our ancestors endured turns into a pretty good life and then, eventually, abundance. But abundance is what selects for the r strategy. Those who would not have reproduced, or have as many children in the K days of yore, now have babies-a-poppin' as in the introduction to Idiocracy, and before long, not waiting for genetics to do its inexorable work, but purely by a shift in incentives, the rs outvote the Ks and the Ks begin to count the days until their society runs out of the wealth which can be plundered from them. But recall that equation. 
In our simple bunnies and wolves model, the resources of the island were static. Nothing the wolves could do would increase K and permit a larger rabbit and wolf population. This isn't the case for humans. K humans dramatically increase the carrying capacity of their environment by inventing new technologies such as agriculture, selective breeding of plants and animals, discovering and exploiting new energy sources such as firewood, coal, and petroleum, and exploring and settling new territories and environments which may require their discoveries to render habitable. The rs don't do these things. And as the rs predominate and take control, this momentum stalls and begins to recede. Then the hard times ensue. As Heinlein said many years ago, “This is known as bad luck.” And then the Gods of the Copybook Headings will, with terror and slaughter, return. And K-selection will, with them, again assert itself. Is this a complete model, a Rosetta stone for human behaviour? I think not: there are a number of things it doesn't explain, and the shifts in behaviour based upon incentives are much too fast to account for by genetics. Still, when you look at those eleven issues I listed so many words ago through the r/K perspective, you can almost immediately see how each strategy maps onto one side or the other of each one, and they are consistent with the policy preferences of “liberals” and “conservatives”. There is also some rather fuzzy evidence for genetic differences (in particular the DRD4-7R allele of the dopamine receptor and size of the right brain amygdala) which appear to correlate with ideology. Still, if you're on one side of the ideological divide and confronted with somebody on the other and try to argue from facts and logical inference, you may end up throwing up your hands (if not your breakfast) and saying, “They just don't get it!” Perhaps they don't. Perhaps they can't. 
Perhaps there's a difference between you and them as great as that between rabbits and wolves, which can't be worked out by predator and prey sitting down and voting on what to have for dinner. This may not be a hopeful view of the political prospect in the near future, but hope is not a strategy and to survive and prosper requires accepting reality as it is and acting accordingly.

- Barlow, Connie. The Ghosts of Evolution. New York: Basic Books, 2000. ISBN 0-465-00552-7.

- Ponder the pit of the avocado; no, actually ponder it—hold it in your hand and get a sense of how big and heavy it is. Now consider that due to its toughness, slick surface, and being laced with toxins, it was meant to be swallowed whole and deposited far from the tree in the dung of the animal who gulped down the entire fruit, pit and all. Just imagine the size of the gullet (and internal tubing) that requires—what on Earth, or more precisely, given the avocado's range, what in the Americas served to disperse these seeds prior to the arrival of humans some 13,000 years ago? The Western Hemisphere was, in fact, prior to the great extinction at the end of the Pleistocene, (coincident with the arrival of humans across the land bridge with Asia, and probably the result of their intensive hunting), home to a rich collection of megafauna: mammoths and mastodons, enormous ground sloths, camels, the original horses, and an armadillo as large as a bear, now all gone. Plants with fruit which doesn't seem to make any sense—which rots beneath the tree and isn't dispersed by any extant creature—may be the orphaned ecological partners of extinct species with which they co-evolved. Plants, particularly perennials and those which can reproduce clonally, evolve much more slowly than mammal and bird species, and may survive, albeit in a limited or spotty range, through secondary dispersers of their seeds (seed hoarders and predators, water, and gravity) long after the animal vectors their seeds evolved to employ have departed the scene. That is the fascinating premise of this book, which examines how enigmatic, apparently nonsensical fruit such as the osage orange, Kentucky coffee tree, honey locust, ginkgo, desert gourd, and others may be, figuratively, ripening their fruit every year waiting for the passing mastodon or megatherium which never arrives, some surviving because they are attractive, useful, and/or tasty to the talking apes who killed off the megafauna. 
All of this is very interesting, and along the way one learns a great deal about the co-evolution of plants and their seed dispersal partners and predators—an endless arms race involving armour, chemical warfare (selective toxins and deterrents in pulp and seeds), stealth, and co-optation (burrs which hitch a ride on the fur of animals). However, this 250 page volume is basically an 85 page essay struggling to get out of the rambling, repetitious, self-indulgent, pretentious prose and unbridled speculations of the author, which results in a literary bolus as difficult to masticate as the seed pods of some of the plants described therein. This book desperately needed the attention of an editor ready to wield the red pencil and Basic Books, generally a quality publisher of popularisations of science, dropped the ball (or, perhaps I should say, spit out the seed) here. The organisation of the text is atrocious—we encounter the same material over and over, frequently see technical terms such as indehiscent used four or five times before they are first defined, only to then endure a half-dozen subsequent definitions of the same word (a brief glossary of botanical terms would be a great improvement), and on occasions botanical jargon is used apparently because it rolls so majestically off the tongue or lends authority to the account—which authority is sorely lacking. While there is serious science and well-documented, peer-reviewed evidence for anachronism in certain fruits, Barlow uses the concept as a launching pad for wild speculation in which any apparent lack of perfect adaptation between a plant and its present-day environment is taken as evidence for an extinct ecological partner. One of many examples is the suggestion on p. 164 that the fact that the American holly tree produces spiny leaves well above the level of any current browser (deer here, not Internet Exploder or Netscrape!) is evidence it evolved to defend itself against much larger herbivores. 
Well, maybe, but it may just be that a tree lacks the means to precisely measure the distance from the ground, and those which err on the side of safety are more likely to survive. The discussion of evolution throughout is laced with teleological and anthropomorphic metaphors which will induce teeth-grinding among Darwinists audible across a large lecture hall. At the start of chapter 8, vertebrate paleontologist Richard Tedford is quoted as saying, “Frankly, this is not really science. You haven't got a way of testing any of this. It's more metaphysics.”—amen. The author tests the toxicity of ginkgo seeds by feeding them to squirrels in a park in New York City (“All the world seems in tune, on a spring afternoon…”), and the attractiveness of maggot-ridden overripe pawpaw fruit by leaving it outside her New Mexico trailer for frequent visitor Mrs. Foxie (you can't make up stuff like this) and, in the morning, it was gone! I recall a similar experiment from childhood involving milk, cookies, and flying reindeer; she does, admittedly, acknowledge that skunks or raccoons might have been responsible. There's an extended discourse on the possible merits of eating dirt, especially for pregnant women, then in the very next chapter the suggestion that the honey locust has “devolved” into the swamp locust, accompanied by an end note observing that a professional botanist expert in the genus considers this nonsense. Don't get me wrong, there's plenty of interesting material here, and much to think about in the complex intertwined evolution of animals and plants, but this is a topic which deserves a more disciplined author and a better book.

- Behe, Michael J., William A. Dembski, and Stephen C. Meyer. Science and Evidence for Design in the Universe. San Francisco: Ignatius Press, 2000. ISBN 0-89870-809-5.

- Brink, Anthony. Debating AZT: Mbeki and the AIDS Drug Controversy. Pietermaritzburg, South Africa: Open Books, 2000. ISBN 0-620-26177-3.

- I bought this volume in a bookshop in South Africa; none of the principal on-line booksellers have ever heard of it. The complete book is now available on the Web.

- Cochran, Gregory and Henry Harpending. The 10,000 Year Explosion. New York: Basic Books, 2009. ISBN 978-0-465-00221-4.

- “Only an intellectual could believe something so stupid” most definitely applies to the conventional wisdom among anthropologists and social scientists that human evolution somehow came to an end around 40,000 years ago with the emergence of modern humans and that differences among human population groups today are only “skin deep”: the basic physical, genetic, and cognitive toolkit of humans around the globe is essentially identical, with only historical contingency and cultural inheritance responsible for different outcomes. To anybody acquainted with evolutionary theory, this should have been dismissed as ideologically motivated nonsensical propaganda on the face of it. Evolution is driven by changes and new challenges faced by a species as it moves into new niches and environments, adapts to environmental change, migrates and encounters new competition, and is afflicted by new diseases which select for those with immunity. Modern humans, in their expansion from Africa to almost every habitable part of the globe, have endured changes and challenges which dwarf those of almost any other metazoan species. It stands to reason, then, that the pace of human evolution, far from coming to a halt, would in fact accelerate dramatically, as natural selection was driven by the coming and going of ice ages, the development of agriculture and domestication of animals, spread of humans into environments inhospitable to their ancestors, trade and conquest resulting in the mixing of genes among populations, and numerous other factors. Fortunately, we're lucky to live in an age in which we need no longer speculate upon such matters. The ability to sequence the human genome and compare the lineage of genes in various populations has created the field of genetic anthropology, which is in the process of transforming what was once a “soft science” into a thoroughly quantitative discipline where theories can be readily falsified by evidence in the genome. 
This book has the potential of creating a phase transition in anthropology: it is a manifesto for the genomic revolution, and a few years from now anthropologists who ignore the kind of evidence presented here will be increasingly forgotten, publishing papers nobody reads because they neglect the irrefutable evidence of human history we carry in our genes. The authors are very ambitious in their claims, and I'm sure that some years from now they will be seen to have overreached in some of them. But the central message will, I am confident, stand: human evolution has dramatically accelerated since the emergence of modern humans, and is being driven at an ever faster pace by the cultural and environmental changes humans are incessantly confronting. Further, human history cannot be understood without first acknowledging that the human populations which were the actors in it were fundamentally different. The conquest of the Americas by Europeans may well not have happened had not Europeans carried genes which protected them against the infectious diseases they also carried on their voyages of exploration and conquest. (By some estimates, indigenous populations in the Americas fell to 10% of their pre-contact levels, precipitating societal collapse.) Why do about half of all humans on Earth speak languages of the Indo-European group? Well, it may be because the obscure cattle herders from the steppes who spoke the ur-language happened to evolve a gene which made them lactose tolerant throughout adulthood, and hence were able to raise cattle for dairy products, which is five times as productive (measured by calories per unit area) as raising cattle for meat. While Europeans' immunity to disease served them well in their conquest of the Americas, their lack of immunity to diseases endemic in sub-Saharan Africa (in particular, falciparum malaria) rendered initial attempts to colonise that region disastrous. 
The authors do not hesitate to speculate on possible genetic influences on events in human history, but their conjectures are based upon published genetic evidence, cited from primary sources in the extensive end notes. A number of these discussions may lead to the sound of skulls exploding among those wedded to the dominant academic dogma. The authors suggest that some of the genes which allowed modern humans emerging from Africa to prosper in northern climes were the result of cross-breeding with Neanderthals; that just as domestication of animals results in neoteny, domestication of humans in agricultural and the consequent state societies has induced neotenous changes in “domesticated humans” which result in populations with a long history of living in agricultural societies adapting better to modern civilisation than those without that selection in their genetic heritage, and that the unique experience of selection for success in intellectually demanding professions and lack of interbreeding resulted in the emergence of the Ashkenazi Jews as a population whose mean intelligence exceeds that of all other human populations (as well as a prevalence of genetic diseases which appear linked to biochemical factors related to brain function). There's an odd kind of doublethink present among many champions of evolutionary theory. While invoking evolution to explain even those aspects of the history of life on Earth where doing so involves what can only be called a “leap of faith”, they dismiss the self-evident consequences of natural selection on populations of their own species. Certainly, all humans constitute a single species: we can interbreed, and that's the definition. But all dogs and wolves can interbreed, yet nobody would say that there is no difference between a Great Dane and a Dachshund. 
Largely isolated human populations have been subjected to unique selective pressures from their environment, diet, diseases, conflict, culture, and competition, and it's nonsense to argue that these challenges did not drive selection of adaptive alleles among the population. This book is a welcome shot across the bow of the “we're all the same” anthropological dogma, and provides a guide to the discoveries to be made as comparative genetics lays a firm scientific foundation for anthropology.

- Darling, David J. Life Everywhere: The Maverick Science of Astrobiology. New York: Basic Books, 2001. ISBN 0-465-01563-8.

- Dembski, William A. No Free Lunch. Lanham, MD: Rowman & Littlefield, 2002. ISBN 0-7425-1297-5.

- It seems to be the rule that the softer the science, the more rigid and vociferously enforced the dogma. Physicists, confident of what they do know and cognisant of how much they still don't, have no problems with speculative theories of parallel universes, wormholes and time machines, and inconstant physical constants. But express the slightest scepticism about Darwinian evolution being the one, completely correct, absolutely established beyond a shadow of a doubt, comprehensive and exclusive explanation for the emergence of complexity and diversity in life on Earth, and outraged biologists run to the courts, the legislature, and the media to suppress the heresy, accusing those who dare to doubt their dogma of being benighted opponents of science seeking to impose a “theocracy”. Funny, I thought science progressed by putting theories to the test, and that all theories were provisional, subject to falsification by experimental evidence or replacement by a more comprehensive theory which explains additional phenomena and/or requires fewer arbitrary assumptions.

In this book, mathematician and philosopher William A. Dembski attempts to lay the mathematical and logical foundation for inferring the presence of intelligent design in biology. Note that “intelligent design” needn't imply divine or supernatural intervention—the “directed panspermia” theory of the origin of life proposed by co-discoverer of the structure of DNA and Nobel Prize winner Francis Crick is a theory of intelligent design which invokes no deity, and my perpetually unfinished work The Rube Goldberg Variations and the science fiction story upon which it is based involve searches for evidence of design in scientific data, not in scripture.

You certainly won't find any theology here. What you will find is logical and mathematical arguments which sometimes ascend (or descend, if you wish) into prose like (p. 153), “Thus, if $P$ characterizes the probability of $E_0$ occurring and $f$ characterizes the physical process that led from $E_0$ to $E_1$, then $P \circ f^{-1}$ characterizes the probability of $E_1$ occurring and $P(E_0) \le P \circ f^{-1}(E_1)$ since $f(E_0) = E_1$ and thus $E_0 \subset f^{-1}(E_1)$.” OK, I did cherry-pick that sentence from a particularly technical section which the author advises readers to skip if they're willing to accept the less formal argument already presented. Technical arguments are well-supplemented by analogies and examples throughout the text.

Dembski argues that what he terms “complex specified information” is conclusive evidence for the presence of design. Complexity (the Shannon information measure) is insufficient—all possible outcomes of flipping a coin 100 times in a row are equally probable—but presented with a sequence of all heads, all tails, alternating heads and tails, or a pattern in which heads occurred only for prime numbered flips, the evidence for design (in this case, cheating or an unfair coin) would be considered overwhelming. Complex information is considered specified if it is compressible in the sense of Chaitin-Kolmogorov-Solomonoff algorithmic information theory, which measures the randomness of a bit string by the length of the shortest computer program which could produce it. The overwhelming majority of 100 bit strings cannot be expressed more compactly than simply by listing the bits; the examples given above, however, are all highly compressible. This is the kind of measure, albeit not rigorously computed, which SETI researchers would use to identify a signal as of intelligent origin, which courts apply in intellectual property cases to decide whether similarity is accidental or deliberate copying, and archaeologists use to determine whether an artefact is of natural or human origin. Only when one starts asking these kinds of questions about biology and the origin of life does controversy erupt!
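The compressibility idea can be tried out with an off-the-shelf compressor. This is only a rough sketch and not a method from the book: zlib's output length is a crude upper bound on algorithmic information content, not the true shortest-program length (which cannot be computed in general). The sequence length `N_FLIPS` is an assumption, chosen much larger than 100 so that zlib's fixed overhead does not swamp the comparison.

```python
import random
import zlib

N_FLIPS = 10_000  # assumption: far more than 100 flips, to dwarf zlib's overhead


def compressed_size(flips):
    """Bytes zlib needs to store a string of 'H'/'T' coin flips."""
    return len(zlib.compress(flips.encode("ascii"), 9))


rng = random.Random(0)  # fixed seed so the run is repeatable
sequences = {
    "all heads": "H" * N_FLIPS,
    "alternating": "HT" * (N_FLIPS // 2),
    "random": "".join(rng.choice("HT") for _ in range(N_FLIPS)),
}
sizes = {name: compressed_size(flips) for name, flips in sequences.items()}

for name, size in sizes.items():
    print(f"{name:12s} {size:6d} bytes")
```

The patterned sequences shrink to a tiny fraction of the size of the random one. A pattern such as “heads only on prime-numbered flips” is a useful caution: a short program can generate it, so it is highly compressible in the algorithmic sense, but a general-purpose compressor like zlib has no notion of primes and would not be expected to find that regularity.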

Chapter 3 proposes a “Law of Conservation of Information” which, if you accept it, would appear to rule out the generation of additional complex specified information by the process of Darwinian evolution. This would mean that while evolution can and does account for the development of resistance to antibiotics in bacteria and pesticides in insects, modification of colouration and pattern due to changes in environment, and all the other well-confirmed cases of the Darwinian mechanism, innovation of entirely novel and irreducibly complex (see chapter 5) mechanisms such as the bacterial flagellum requires some external input of the complex specified information they embody. Well, maybe…but one should remember that conservation laws in science, unlike invariants in mathematics, are empirical observations which can be falsified by a single counter-example. Niels Bohr, for example, prior to its explanation due to the neutrino, theorised that the energy spectrum of nuclear beta decay could be due to a violation of conservation of energy, and his theory was taken seriously until ruled out by experiment.

Let's suppose, for the sake of argument, that Darwinian evolution does explain the emergence of all the complexity of the Earth's biosphere, starting with a single primordial replicating lifeform. Then one still must explain how that replicator came to be in the first place (since Darwinian evolution cannot work on non-replicating organisms), and where the information embodied in its molecular structure came from. The smallest present-day bacterial genomes belong to symbiotic or parasitic species, and are in the neighbourhood of 500,000 base pairs, or roughly 1 megabit of information. Even granting that the ancestral organism might have been much smaller and simpler, it is difficult to imagine a replicator capable of Darwinian evolution with an information content 1000 times smaller than these bacteria. Yet randomly assembling even 500 bits of precisely specified information seems to be beyond the capacity of the universe we inhabit. If you imagine every one of the approximately 10⁸⁰ elementary particles in the universe trying combinations every Planck interval, 10⁴⁵ times every second, it would still take about a billion times the present age of the universe to randomly discover a 500 bit pattern. Of course, there are doubtless many patterns which would work, but when you consider how conservative all the assumptions are which go into this estimate, and reflect upon the evidence that life seemed to appear on Earth just about as early as environmental conditions permitted it to exist, it's pretty clear that glib claims that evolution explains everything and there are just a few details to be sorted out are arm-waving at best and propaganda at worst, and that it's far too early to exclude any plausible theory which could explain the mystery of the origin of life.
Although there are many points in this book with which you may take issue, and it does not claim in any way to provide answers, it is valuable in understanding just how difficult the problem is and how many holes exist in other, more accepted, explanations. A clear challenge posed to purely naturalistic explanations of the origin of terrestrial life is to suggest a prebiotic mechanism which can assemble adequate specified information (say, 500 bits as the absolute minimum) to serve as a primordial replicator from the materials available on the early Earth in the time between the final catastrophic bombardment and the first evidence for early life.

- Duesberg, Peter H. Inventing the AIDS Virus. Washington: Regnery, 1996. ISBN 0-89526-470-6.

- Dyson, Freeman J. The Sun, the Genome, and the Internet. Oxford: Oxford University Press, 1999. ISBN 0-19-513922-4.

- The text in this book is set in a hideous flavour of the Adobe Caslon font in which little curlicue ligatures connect the letter pairs “ct” and “st” and, in addition, the “ligatures” for “ff”, “fi”, “fl”, and “ft” lop off most of the bar of the “f”, leaving it looking like a droopy “l”. This might have been elegant for chapter titles, but it's way over the top for body copy. Dyson's writing, of course, more than redeems the bad typography, but you gotta wonder why we couldn't have had the former without the latter.

- Dyson, Freeman J. Origins of Life. 2nd. ed. Cambridge: Cambridge University Press, 1999. ISBN 0-521-62668-4.

- The years which followed Freeman Dyson's 1985 Tarner lectures, published in the first edition of Origins of Life that year, saw tremendous progress in molecular biology, including the determination of the complete nucleotide sequences of organisms ranging from E. coli to H. sapiens, and a variety of evidence indicating the importance of Archaea and the deep, hot biosphere to theories of the origin of life. In this extensively revised second edition, Dyson incorporates subsequent work relevant to his double-origin (metabolism first, replication later) hypothesis. It's perhaps indicative of how difficult the problem of the origin of life is that none of the multitude of experiments done in the almost 20 years since Dyson's original lectures has substantially confirmed or denied his theory nor answered any of the explicit questions he posed as challenges to experimenters.

- Entine, Jon. Taboo. New York: PublicAffairs, 2000. ISBN 1-58648-026-X.

-

A certain segment of the dogma-based community of postmodern academics and their hangers-on seems to have no difficulty whatsoever believing that Darwinian evolution explains every aspect of the origin and diversification of life on Earth while, at the same time, denying that genetics—the mechanism which underlies evolution—plays any part in differentiating groups of humans. Doublethink is easy if you never think at all. Among those to whom evidence matters, here's a pretty astonishing fact to ponder. In the last four Olympic games prior to the publication of this book in the year 2000, there were thirty-two finalists in the men's 100-metre sprint. All thirty-two were of West African descent—a region which accounts for just 8% of the world's population. If finalists in this event were randomly chosen from the entire global population, the probability of this concentration occurring by chance is 0.08³² or about 8×10⁻³⁶, which is significant at the level of more than twelve standard deviations. The hardest of results in the flintiest of sciences—null tests of conservation laws and the like—are rarely significant above 7 to 8 standard deviations.
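The arithmetic is easy to check. The sketch below treats the thirty-two finalists as independent random draws from the world population, as the text does, and converts the resulting probability into standard deviations using a one-sided tail of the normal distribution; the one-sided convention is an assumption, since the review does not say how the conversion was made, and the variable names are illustrative only.

```python
from statistics import NormalDist

share = 0.08     # West African share of world population, as quoted in the text
finalists = 32   # finalists over the four Olympic games considered

# Chance that all 32 independent random draws fall within the 8% group.
p = share ** finalists

# Equivalent one-sided significance in standard deviations of a normal
# distribution (assumed convention; a two-sided test gives a similar figure).
sigma = -NormalDist().inv_cdf(p)

print(f"p = {p:.2e}, roughly {sigma:.1f} standard deviations")
```

Under these assumptions the probability comes out just under 8×10⁻³⁶ and the significance a little above twelve standard deviations, in line with the figures quoted.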

Now one can certainly imagine any number of cultural and other non-genetic factors which predispose those with West African ancestry toward world-class performance in sprinting, but twelve standard deviations? The fact that running is something all humans do without being taught, and that training for running doesn't require any complicated or expensive equipment (as opposed to sports such as swimming, high-diving, rowing, or equestrian events), and that champions of West African ancestry hail from countries around the world, should suggest a genetic component to all but the most blinkered of blank slaters.

Taboo explores the reality of racial differences in performance in various sports, and the long and often sordid entangled histories of race and sports, including the tawdry story of race science and eugenics, over-reaction to which has made most discussion of human biodiversity, as the title of the book says, taboo. The equally forbidden subject of inherent differences in male and female athletic performance is delved into as well, with a look at the hormone-dripping “babes from Berlin” manufactured by the cruel and exploitive East German sports machine before the collapse of that dismal and unlamented tyranny.

Those who know some statistics will have no difficulty understanding what's going on here—the graph on page 255 tells the whole story. I wish the book had gone into a little more depth about the phenomenon of a slight shift in the mean performance of a group—much smaller than individual variation—causing a huge difference in the number of group members found in the extreme tail of a normal distribution. Another valuable, albeit speculative, insight is that if one supposes that there are genes which confer advantage to competitors in certain athletic events, then given the intense winnowing process world-class athletes pass through before they reach the starting line at the Olympics, it is plausible all of them at that level possess every favourable gene, and that the winner is determined by training, will to win, strategy, individual differences, and luck, just as one assumed before genetics got mixed up in the matter. It's just that if you don't have the genes (just as if your legs aren't long enough to be a runner), you don't get anywhere near that level of competition.
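The tail effect mentioned above is a general property of the normal distribution and can be sketched numerically. The example assumes two hypothetical groups of equal size with identical spread whose means differ by half a standard deviation; the value of `SHIFT`, the equal-variance assumption, and the function names are all illustrative choices, not figures taken from the book.

```python
from math import erfc, sqrt


def tail(z: float) -> float:
    """Fraction of a standard normal distribution lying above z."""
    return 0.5 * erfc(z / sqrt(2.0))


SHIFT = 0.5  # hypothetical difference in group means, in standard deviations


def tail_ratio(cutoff: float, shift: float = SHIFT) -> float:
    """Over-representation of the shifted group above a cutoff,
    measured in standard deviations of the unshifted group."""
    return tail(cutoff - shift) / tail(cutoff)


for cutoff in (1, 2, 3, 4, 5, 6):
    print(f"cutoff {cutoff} sd: ratio {tail_ratio(cutoff):6.2f}")
```

Near the middle of the distribution the two groups are almost equally represented, but the ratio climbs steadily as the cutoff moves outward, reaching well over an order of magnitude at six standard deviations. A difference invisible in everyday comparisons of individuals can therefore dominate at the level of Olympic finalists.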

Unless research in these areas is suppressed due to an ill-considered political agenda, it is likely that the key genetic components of athletic performance will be identified in the next couple of decades. Will this mean that world-class athletic competition can be replaced by DNA tests? Of course not—it's just that one factor in the feedback loop of genetic endowment, cultural reinforcement of activities in which group members excel, and the individual striving for excellence which makes competitors into champions will be better understood.

- Gamow, George. One, Two, Three…Infinity. Mineola, NY: Dover, [1947] 1961. rev. ed. ISBN 0-486-25664-2.

- This book, which I first read at around age twelve, rekindled my native interest in mathematics and science which had, by then, been almost entirely extinguished by six years of that intellectual torture called “classroom instruction”. Gamow was an eminent physicist: among other things, he advocated the big bang theory decades before it became fashionable, originated the concept of big bang nucleosynthesis, predicted the cosmic microwave background radiation 16 years before it was discovered, proposed the liquid drop model of the atomic nucleus, worked extensively in the astrophysics of energy production in stars, and even designed a nuclear bomb (“Greenhouse George”), which initiated the first deuterium-tritium fusion reaction here on Earth. But he was also one of the most talented popularisers of science in the twentieth century, with a total of 18 popular science books published between 1939 and 1967, including the Mr Tompkins series, timeless classics which inspired many of the science visualisation projects at this site, in particular C-ship. He was a talented cartoonist as well: 128 of his delightful pen and ink drawings grace this volume. For a work published in 1947 with relatively minor revisions in the 1961 edition, this book has withstood the test of time remarkably well—Gamow was both wise and lucky in his choice of topics. Certainly, nobody should consider this book a survey of present-day science, but for folks well-grounded in contemporary orthodoxy, it's a delightful period piece providing a glimpse of the scientific world view of almost a half-century ago as explained by a master of the art. This Dover paperback is an unabridged reprint of the 1961 revised edition.

- Gold, Thomas. The Deep Hot Biosphere. New York: Copernicus, 1999. ISBN 0-387-98546-8.

- Gordon, Deborah M. Ants at Work. New York: The Free Press, 1999. ISBN 0-684-85733-2.

- Hawkins, Jeff with Sandra Blakeslee. On Intelligence. New York: Times Books, 2004. ISBN 0-8050-7456-2.

- Ever since the early days of research into the sub-topic of computer science which styles itself “artificial intelligence”, such work has been criticised by philosophers, biologists, and neuroscientists who argue that while symbolic manipulation, database retrieval, and logical computation may be able to mimic, to some limited extent, the behaviour of an intelligent being, in no case does the computer understand the problem it is solving in the sense a human does. John R. Searle's “Chinese Room” thought experiment is one of the best known and extensively debated of these criticisms, but there are many others just as cogent and difficult to refute. These days, criticising artificial intelligence verges on hunting cows with a bazooka—unlike the early days in the 1950s when everybody expected the world chess championship to be held by a computer within five or ten years and mathematicians were fretting over what they'd do with their lives once computers learnt to discover and prove theorems thousands of times faster than they, decades of hype, fads, disappointment, and broken promises have instilled some sense of reality into the expectations most technical people have for “AI”, if not into those working in the field and those they bamboozle with the sixth (or is it the sixteenth) generation of AI bafflegab. AI researchers sometimes defend their field by saying “If it works, it isn't AI”, by which they mean that as soon as a difficult problem once considered within the domain of artificial intelligence—optical character recognition, playing chess at the grandmaster level, recognising faces in a crowd—is solved, it's no longer considered AI but simply another computer application, leaving AI with the remaining unsolved problems. 
There is certainly some truth in this, but a closer look gives the lie to the claim that these problems, solved with enormous effort on the part of numerous researchers, and with the application, in most cases, of computing power undreamed of in the early days of AI, actually represent “intelligence”, or at least what one regards as intelligent behaviour on the part of a living brain. First of all, in no case did a computer “learn” how to solve these problems in the way a human or other organism does; in every case experts analysed the specific problem domain in great detail, developed special-purpose solutions tailored to the problem, and then implemented them on computing hardware which in no way resembles the human brain. Further, each of these “successes” of AI is useless outside its narrow scope of application: a chess-playing computer cannot read handwriting, a speech recognition program cannot identify faces, and a natural language query program cannot solve mathematical “word problems” which pose no difficulty to fourth graders. And while many of these programs are said to be “trained” by presenting them with collections of stimuli and desired responses, no amount of such training will permit, say, an optical character recognition program to learn to write limericks. Such programs can certainly be useful, but nothing other than the fact that they solve problems which were once considered difficult in an age when computers were much slower and had limited memory resources justifies calling them “intelligent”, and outside the marketing department, few people would remotely consider them so. The subject of this ambitious book is not “artificial intelligence” but intelligence: the real thing, as manifested in the higher cognitive processes of the mammalian brain, embodied, by all the evidence, in the neocortex. One of the most fascinating things about the neocortex is how much a creature can do without one, for only mammals have them.
Reptiles, birds, amphibians, fish, and even insects (which barely have a brain at all) exhibit complex behaviour, perception of and interaction with their environment, and adaptation to an extent which puts to shame the much-vaunted products of “artificial intelligence”, and yet they all do so without a neocortex at all. In this book, the author hypothesises that the neocortex evolved in mammals as an add-on to the old brain (essentially, what computer architects would call a “bag hanging on the side of the old machine”) which implements a multi-level hierarchical associative memory for patterns and a complementary decoder from patterns to detailed low-level behaviour which, wired through the old brain to the sensory inputs and motor controls, dynamically learns spatial and temporal patterns and uses them to make predictions which are fed back to the lower levels of the hierarchy, which in turn signal whether further inputs confirm or deny them. The ability of the high-level cortex to correctly predict inputs is what we call “understanding” and it is something which no computer program is presently capable of doing in the general case. Much of the recent and present-day work in neuroscience has been devoted to imaging where the brain processes various kinds of information. While fascinating and useful, these investigations may overlook one of the most striking things about the neocortex: that almost every part of it, whether devoted to vision, hearing, touch, speech, or motion appears to have more or less the same structure. This observation, by Vernon B. Mountcastle in 1978, suggests there may be a common cortical algorithm by which all of these seemingly disparate forms of processing are done. Consider: by the time sensory inputs reach the brain, they are all in the form of spikes transmitted by neurons, and all outputs are sent in the same form, regardless of their ultimate effect.
Further, evidence of plasticity in the cortex is abundant: in cases of damage, the brain seems to be able to re-wire itself to transfer a function to a different region of the cortex. In a long (70 page) chapter, the author presents a sketchy model of what such a common cortical algorithm might be, and how it may be implemented within the known physiological structure of the cortex. The author is a founder of Palm Computing and Handspring (which was subsequently acquired by Palm). He subsequently founded the Redwood Neuroscience Institute, which has now become part of the Helen Wills Neuroscience Institute at the University of California, Berkeley, and in March of 2005 founded Numenta, Inc. with the goal of developing computer memory systems based on the model of the neocortex presented in this book. Some academic scientists may sniff at the pretensions of a (very successful) entrepreneur diving into their speciality and trying to figure out how the brain works at a high level. But, hey, nobody else seems to be doing it—the computer scientists are hacking away at their monster programs and parallel machines, the brain community seems stuck on functional imaging (like trying to reverse-engineer a microprocessor in the nineteenth century by looking at its gross chemical and electrical properties), and the neuron experts are off dissecting squid: none of these seem likely to lead to an understanding (there's that word again!) of what's actually going on inside their own tenured, taxpayer-funded skulls. There is undoubtedly much that is wrong in the author's speculations, but then he admits that from the outset and, admirably, presents an appendix containing eleven testable predictions, each of which can falsify all or part of his theory. 
I've long suspected that intelligence has more to do with memory than computation, so I'll confess to being predisposed toward the arguments presented here, but I'd be surprised if any reader didn't find themselves thinking about their own thought processes in a different way after reading this book. You won't find the answers to the mysteries of the brain here, but at least you'll discover many of the questions worth pondering, and perhaps an idea or two worth exploring with the vast computing power at the disposal of individuals today and the boundless resources of data in all forms available on the Internet.

- Kauffman, Stuart A. Investigations. New York: Oxford University Press, 2000. ISBN 0-19-512105-8.

-

Few people have thought as long and as hard about the origin of life and the emergence of complexity in a biosphere as Stuart Kauffman. Medical doctor, geneticist, professor of biochemistry and biophysics, MacArthur Fellow, and member of the faculty of the Santa Fe Institute for a decade, he has sought to discover the principles which might underlie a “general biology”—the laws which would govern any biosphere, whether terrestrial, extraterrestrial, or simulated within a computer, regardless of its physical substrate.

This book, which he describes on occasion as “protoscience”, provides an overview of the principles he suspects, but cannot prove, may underlie all forms of life, and beyond that systems in general which are far from equilibrium such as a modern technological economy and the universe itself. Most of science before the middle of the twentieth century studied complex systems at or near equilibrium; only at such states could the simplifying assumptions of statistical mechanics be applied to render the problem tractable. With computers, however, we can now begin to explore open systems (albeit far smaller than those in nature) which are far from equilibrium, have dynamic flows of energy and material, and do not necessarily evolve toward a state of maximum entropy.

Kauffman believes there may be what amounts to a fourth law of thermodynamics which applies to such systems and, although we don't know enough to state it precisely, he suspects it may be that these open, extremely nonergodic, systems evolve as rapidly as possible to expand and fill their state space and that unlike, say, a gas in a closed volume or the stars in a galaxy, where the complete state space can be specified in advance (that is, the dimensionality of the space, not the precise position and momentum values of every object within it), the state space of a non-equilibrium system cannot be prestated because its very evolution expands the state space. The presence of autonomous agents introduces another level of complexity and creativity, as evolution drives the agents to greater and greater diversity and complexity to better adapt to the ever-shifting fitness landscape.

These are complicated and deep issues, and this is a very difficult book, although appearing, at first glance, to be written for a popular audience. I seriously doubt whether somebody who was not previously acquainted with these topics and thought about them at some length will make it to the end and, even if they do, take much away from the book. Those who are comfortable with the laws of thermodynamics, the genetic code, protein chemistry, catalysis, autocatalytic networks, Carnot cycles, fitness landscapes, hill-climbing strategies, the no-go theorem, error catastrophes, self-organisation, percolation phase transitions in graphs, and other technical issues raised in the arguments must still confront the author's prose style. It seems like Kauffman aspires to be a prose stylist conveying a sense of wonder to his readers along the lines of Carl Sagan and Stephen Jay Gould. Unfortunately, he doesn't pull it off as well, and the reader must wade through numerous paragraphs like the following from pp. 97–98:

Does it always take work to construct constraints? No, as we will soon see. Does it often take work to construct constraints? Yes. In those cases, the work done to construct constraints is, in fact, another coupling of spontaneous and nonspontaneous processes. But this is just what we are suggesting must occur in autonomous agents. In the universe as a whole, exploding from the big bang into this vast diversity, are many of the constraints on the release of energy that have formed due to a linking of spontaneous and nonspontaneous processes? Yes. What might this be about? I'll say it again. The universe is full of sources of energy. Nonequilibrium processes and structures of increasing diversity and complexity arise that constitute sources of energy that measure, detect, and capture those sources of energy, build new structures that constitute constraints on the release of energy, and hence drive nonspontaneous processes to create more such diversifying and novel processes, structures, and energy sources.

I have not cherry-picked this passage; there are hundreds of others like it. Given the complexity of the technical material and the difficulty of the concepts being explained, it seems to me that the straightforward, unaffected Point A to Point B style of explanation which Isaac Asimov employed would work much better. Pardon my audacity, but allow me to rewrite the above paragraph.

Autonomous agents require energy, and the universe is full of sources of energy. But in order to do work, they require energy to be released under constraints. Some constraints are natural, but others are constructed by autonomous agents which must do work to build novel constraints. A new constraint, once built, provides access to new sources of energy, which can be exploited by new agents, contributing to an ever growing diversity and complexity of agents, constraints, and sources of energy.

Which is better? I rewrite; you decide.

The tone of the prose is all over the place. In one paragraph he's talking about Tomasina the trilobite (p. 129) and Gertrude the ugly squirrel (p. 131), then the next thing you know it's “Here, the hexamer is simplified to 3'CCCGGG5', and the two complementary trimers are 5'GGG3' + 5'CCC3'. Left to its own devices, this reaction is exergonic and, in the presence of excess trimers compared to the equilibrium ratio of hexamer to trimers, will flow exergonically toward equilibrium by synthesizing the hexamer.” (p. 64). This flipping back and forth between colloquial and scholarly voices leads to a kind of comprehensional kinetosis. There are a few typographical errors, none serious, but I have to share this delightful one-sentence paragraph from p. 254 (ellipsis in the original):

By iteration, we can construct a graph connecting the founder spin network with its 1-Pachner move “descendants,” 2-Pachner move descendints…N-Pachner move descendents.

Good grief—is Oxford University Press outsourcing their copy editing to Slashdot?

For the reasons given above, I found this a difficult read. But it is an important book, bristling with ideas which will get you looking at the big questions in a different way, and speculating, along with the author, that there may be some profound scientific insights which science has overlooked to date sitting right before our eyes—in the biosphere, the economy, and this fantastically complicated universe which seems to have emerged somehow from a near-thermalised big bang. While Kauffman is the first to admit that these are hypotheses and speculations, not science, they are eminently testable by straightforward scientific investigation, and there is every reason to believe that if there are, indeed, general laws that govern these phenomena, we will begin to glimpse them in the next few decades. If you're interested in these matters, this is a book you shouldn't miss, but be aware what you're getting into when you undertake to read it.

- Kaufman, Marc. First Contact. New York: Simon & Schuster, 2011. ISBN 978-1-4391-0901-4.

- How many fields of science can you think of which study something for which there is no generally accepted experimental evidence whatsoever? Such areas of inquiry certainly exist: string theory and quantum gravity come immediately to mind, but those are research programs motivated by self-evident shortcomings in the theoretical foundations of physics which become apparent when our current understanding is extrapolated to very high energies. Astrobiology, the study of life in the cosmos, has, to date, only one exemplar to investigate: life on Earth. For despite the enormous diversity of terrestrial life, it shares a common genetic code and molecular machinery, and appears to be descended from a common ancestral organism. And yet in the last few decades astrobiology has been a field which, although having not so far unambiguously identified extraterrestrial life, has learned a great deal about life on Earth, the nature of life, possible paths for the origin of life on Earth and elsewhere, and the habitats in the universe where life might be found. This book, by a veteran Washington Post science reporter, visits the astrobiologists in their native habitats, ranging from deep mines in South Africa, where organisms separated from the surface biosphere for millions of years have been identified; to Antarctica, whose ice hosts microbes the likes of which might flourish on the icy bodies of the outer solar system; to planet hunters patiently observing stars from the ground and space to discover worlds orbiting distant stars. It is amazing how much we have learned in such a short time. When I was a kid, many imagined that Venus's clouds shrouded a world of steamy jungles, and that Mars had plants which changed colour with the seasons. No planet of another star had been detected, and respectable astronomers argued that the solar system might have been formed by a freak close approach between two stars and that planets might be extremely rare.
The genetic code of life had not been decoded, and an entire domain of Earthly life, bearing important clues for life's origin, was unknown and unsuspected. This book describes the discoveries which have filled in the blanks over the last few decades, painting a picture of a galaxy in which planets abound, many in the “habitable zone” of their stars. Life on Earth has been found to have colonised habitats previously considered as inhospitable to life as other worlds: absence of oxygen, no sunlight, temperatures near freezing or above the boiling point of water, extreme acidity or alkalinity: life finds a way. We may have already discovered extraterrestrial life. The author meets the thoroughly respectable scientists who operated the life detection experiments of the Viking Mars landers in the 1970s, sought microfossils of organisms in a meteorite from Mars found in Antarctica, and searched for evidence of life in carbonaceous meteorites. Each believes the results of their work are evidence of life beyond Earth, but the standard of evidence required for such an extraordinary claim has not been met in the opinion of most investigators. While most astrobiologists seek evidence of simple life forms (which exclusively inhabited Earth for most of its history), the Search for Extraterrestrial Intelligence (SETI) jumps to the other end of evolution and seeks interstellar communications from other technological civilisations. While initial searches were extremely limited in the assumptions about signals they might detect, progress in computing has drastically increased the scope of these investigations. In addition, other channels of communication, such as very short optical pulses, are now being explored.
While no signals have been detected in 50 years of off and on searching, only a minuscule fraction of the search space has been explored, and it may be that in retrospect we'll realise that we've had evidence of interstellar signals in our databases for years in the form of transient pulses not recognised because we were looking for narrowband continuous beacons. Discovery of life beyond the Earth, whether humble microbes on other bodies of the solar system or an extraterrestrial civilisation millions of years older than our own spamming the galaxy with its ETwitter feed, would arguably be the most significant discovery in the history of science. If we have only one example of life in the universe, its origin may have been a forbiddingly improbable fluke which happened only once in our galaxy or in the entire universe. But if there are two independent examples of the origin of life (note that if we find life on Mars, it is crucial to determine whether it shares a common origin with terrestrial life: since meteors exchange material between the planets, it's possible Earth life originated on Mars or vice versa), then there is every reason to believe life is as common in the cosmos as we are now finding planets to be. Perhaps in the next few decades we will discover the universe to be filled with wondrous creatures awaiting our discovery. Or maybe not—we may be alone in the universe, in which case it is our destiny to bring it to life.

- Koman, Victor. Solomon's Knife. Mill Valley, CA: Pulpless.Com, [1989] 1999. ISBN 1-58445-072-X.

- Kurzweil, Ray. The Singularity Is Near. New York: Viking, 2005. ISBN 0-670-03384-7.

-

What happens if Moore's Law (the annual doubling of computing power at constant cost) just keeps on going? In this book, inventor, entrepreneur, and futurist Ray Kurzweil extrapolates the long-term faster-than-exponential growth (the exponent is itself growing exponentially) in computing power to the point where the computational capacity of the human brain is available for about US$1000 (around 2020, he estimates), reverse engineering and emulation of human brain structure permits machine intelligence indistinguishable from that of humans as defined by the Turing test (around 2030), and the subsequent (and he believes inevitable) runaway growth in artificial intelligence leading to a technological singularity around 2045 when US$1000 will purchase computing power comparable to that of all presently-existing human brains and the new intelligence created in that single year will be a billion times greater than that of the entire intellectual heritage of human civilisation prior to that date. He argues that the inhabitants of this brave new world, having transcended biological computation in favour of nanotechnological substrates “trillions of trillions of times more capable” will remain human, having preserved their essential identity and evolutionary heritage across this leap to Godlike intellectual powers. Then what? One might as well have asked an ant to speculate on what newly-evolved hominids would end up accomplishing, as the gap between ourselves and these super cyborgs (some of the precursors of which the author argues are alive today) is probably greater than between arthropod and anthropoid.
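
To get a feel for the scale of the extrapolation, here is a minimal sketch of plain annual doubling. It is deliberately simpler than Kurzweil's faster-than-exponential curve, so it illustrates only the baseline and does not reproduce the book's dates. The function names and the default one-year doubling time are assumptions made for this illustration.

```python
import math


def growth_after(years, doubling_time_years=1.0):
    """Growth factor after `years` for a quantity that doubles every
    `doubling_time_years` (assumed: plain exponential growth)."""
    return 2.0 ** (years / doubling_time_years)


def years_to_grow(factor, doubling_time_years=1.0):
    """Years needed to grow by `factor` under the same assumption."""
    return math.log2(factor) * doubling_time_years


# Ten annual doublings give a factor of 2**10.
print(growth_after(10))                 # 1024.0

# A billion-fold increase needs about thirty annual doublings.
print(round(years_to_grow(1e9), 1))     # 29.9
```

With simple annual doubling, a factor of a billion takes roughly thirty years; a growth rate that itself accelerates would get there sooner.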

Throughout this tour de force of boundless technological optimism, one is impressed by the author's adamantine intellectual integrity. This is not an advocacy document; in fact, Kurzweil's view is that the events he envisions are essentially inevitable given the technological, economic, and moral (curing disease and alleviating suffering) dynamics driving them. Potential roadblocks are discussed candidly, along with the existential risks posed by the genetics, nanotechnology, and robotics (GNR) revolutions which will set the stage for the singularity. A chapter is devoted to responding to critics of various aspects of the argument, in which opposing views are treated with respect.

I'm not going to expound further in great detail. I suspect a majority of people who read these comments will, in all likelihood, read the book themselves (if they haven't already) and make up their own minds about it. If you are at all interested in the evolution of technology in this century and its consequences for the humans who are creating it, this is certainly a book you should read. The balance of these remarks discusses various matters which came to mind as I read the book; they may not make much sense unless you've read it (You are going to read it, aren't you?), but may highlight things to reflect upon as you do.

- Switching off the simulation. Page 404 raises a somewhat arcane risk I've pondered at some length. Suppose our entire universe is a simulation run on some super-intelligent being's computer. (What's the purpose of the universe? It's a science fair project!) What should we do to avoid having the simulation turned off, which would be bad? Presumably, the most likely reason to stop the simulation is that it's become boring. Going through a technological singularity, either from the inside or from the outside looking in, certainly doesn't sound boring, so Kurzweil argues that working toward the singularity protects us, if we be simulated, from having our plug pulled. Well, maybe, but suppose the explosion in computing power accessible to the simulated beings (us) at the singularity exceeds that available to run the simulation? (This is plausible, since post-singularity computing rapidly approaches its ultimate physical limits.) Then one imagines some super-kid running `top` to figure out what's slowing down the First Superbeing Shooter game he's running and killing the CPU hog process. There are also things we can do which might increase the risk of the simulation's being switched off. Consider, as I've proposed, precision fundamental physics experiments aimed at detecting round-off errors in the simulation (manifested, for example, as small violations of conservation laws). Once the beings in the simulation twig to the fact that they're in a simulation and that their reality is no more accurate than double precision floating point, what's the point of letting it run?

- A hundred bits per atom? In the description of the computational capacity of a rock (p. 131), the calculation assumes that 100 bits of memory can be encoded in each atom of a disordered medium. I don't get it; even reliably storing a single bit per atom is difficult to envision. Using the “precise position, spin, and quantum state” of a large ensemble of atoms as mentioned on p. 134 seems highly dubious.

- Luddites. The risk from anti-technology backlash is discussed in some detail. (“Ned Ludd” himself joins in some of the trans-temporal dialogues.) One can imagine the next generation of anti-globalist demonstrators taking to the streets to protest the “evil corporations conspiring to make us all rich and immortal”.

- Fundamentalism. Another risk is posed by fundamentalism, not so much of the religious variety, but rather fundamentalist humanists who perceive the migration of humans to non-biological substrates (at first by augmentation, later by uploading) as repellent to their biological conception of humanity. One is inclined, along with the author, simply to wait until these folks get old enough to need a hip replacement, pacemaker, or cerebral implant to reverse a degenerative disease to motivate them to recalibrate their definition of “purely biological”. Still, I'm far from the first to observe that Singularitarianism (chapter 7) itself has some things in common with religious fundamentalism. In particular, it requires faith in rationality (which, as Karl Popper observed, cannot be rationally justified), and that the intentions of super-intelligent beings, as Godlike in their powers compared to humans as we are to Saccharomyces cerevisiae, will be benign and that they will receive us into eternal life and bliss. Haven't I heard this somewhere before? The main difference is that the Singularitarian doesn't just aspire to Heaven, but to Godhood Itself. One downside of this may be that God gets quite irate.

- Vanity. I usually try to avoid the “Washington read” (picking up a book and flipping immediately to the index to see if I'm in it), but I happened to notice in passing I made this one, for a minor citation in footnote 47 to chapter 2.

- Spindle cells. The material about “spindle cells” on pp. 191–194 is absolutely fascinating. These are very large, deeply and widely interconnected neurons which are found only in humans and a few great apes. Humans have about 80,000 spindle cells, while gorillas have 16,000, bonobos 2,100 and chimpanzees 1,800. If you're intrigued by what makes humans human, this looks like a promising place to start.

- Speculative physics. The author shares my interest in physics verging on the fringe, and, turning the pages of this book, we come across such topics as possible ways to exceed the speed of light, black hole ultimate computers, stable wormholes and closed timelike curves (a.k.a. time machines), baby universes, cold fusion, and more. Now, none of these things is in any way relevant to or necessary for the advent of the singularity, which requires only well-understood mainstream physics. The speculative topics enter primarily in discussions of the ultimate limits on a post-singularity civilisation and the implications for the destiny of intelligence in the universe. In a way they may distract from the argument, since a reader might be inclined to dismiss the singularity as yet another woolly speculation, which it isn't.

- Source citations. The end notes contain many citations of articles in Wired, which I consider an entertainment medium rather than a reliable source of technological information. There are also references to articles in Wikipedia, where any idiot can modify anything any time they feel like it. I would not consider any information from these sources reliable unless independently verified from more scholarly publications.

- “You apes wanna live forever?” Kurzweil doesn't just anticipate the singularity, he hopes to personally experience it, to which end (p. 211) he ingests “250 supplements (pills) a day and … a half-dozen intravenous therapies each week”. Setting aside the shots, just envision two hundred and fifty pills each and every day! That's 1,750 pills a week or, if you're awake sixteen hours a day, an average of more than 15 pills per waking hour, or one pill about every four minutes (one presumes they are swallowed in batches, not spaced out, which would make for a somewhat odd social life). Between the year 2000 and the estimated arrival of human-level artificial intelligence in 2030, he will swallow in excess of two and a half million pills, which makes one wonder what the probability of choking to death on any individual pill might be. He remarks, “Although my program may seem extreme, it is actually conservative—and optimal (based on my current knowledge).” Well, okay, but I'd worry about a “strategy for preventing heart disease [which] is to adopt ten different heart-disease-prevention therapies that attack each of the known risk factors” running into unanticipated interactions, given how everything in biology tends to connect to everything else. There is little discussion of the alternative approach to immortality with which many nanotechnologists of the mambo chicken persuasion are enamoured, which involves severing the heads of recently deceased individuals and freezing them in liquid nitrogen in sure and certain hope of the resurrection unto eternal life.
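
The pill arithmetic in the last item is easy to check. The sketch below reproduces it from the figures quoted in the text (250 pills a day, sixteen waking hours, the years 2000 to 2030). The variable names are illustrative, and the 365-day year, which ignores leap days, is an assumption of this sketch.

```python
PILLS_PER_DAY = 250            # figure quoted from p. 211
WAKING_HOURS_PER_DAY = 16      # waking day assumed in the text
START_YEAR, END_YEAR = 2000, 2030
DAYS_PER_YEAR = 365            # assumption: leap days ignored

pills_per_week = PILLS_PER_DAY * 7
pills_per_waking_hour = PILLS_PER_DAY / WAKING_HOURS_PER_DAY
minutes_between_pills = 60 / pills_per_waking_hour
total_pills = PILLS_PER_DAY * DAYS_PER_YEAR * (END_YEAR - START_YEAR)

print(f"{pills_per_week:,} pills per week")                     # 1,750
print(f"{pills_per_waking_hour:.3f} pills per waking hour")     # 15.625
print(f"one pill every {minutes_between_pills:.2f} minutes")    # 3.84
print(f"{total_pills:,} pills from {START_YEAR} to {END_YEAR}")  # 2,737,500
```

The results agree with the figures in the text: more than 15 pills per waking hour, about one every four minutes, and over two and a half million pills in thirty years.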

- Lane, Nick. Power, Sex, Suicide. Oxford: Oxford University Press, 2005. ISBN 978-0-19-920564-6.